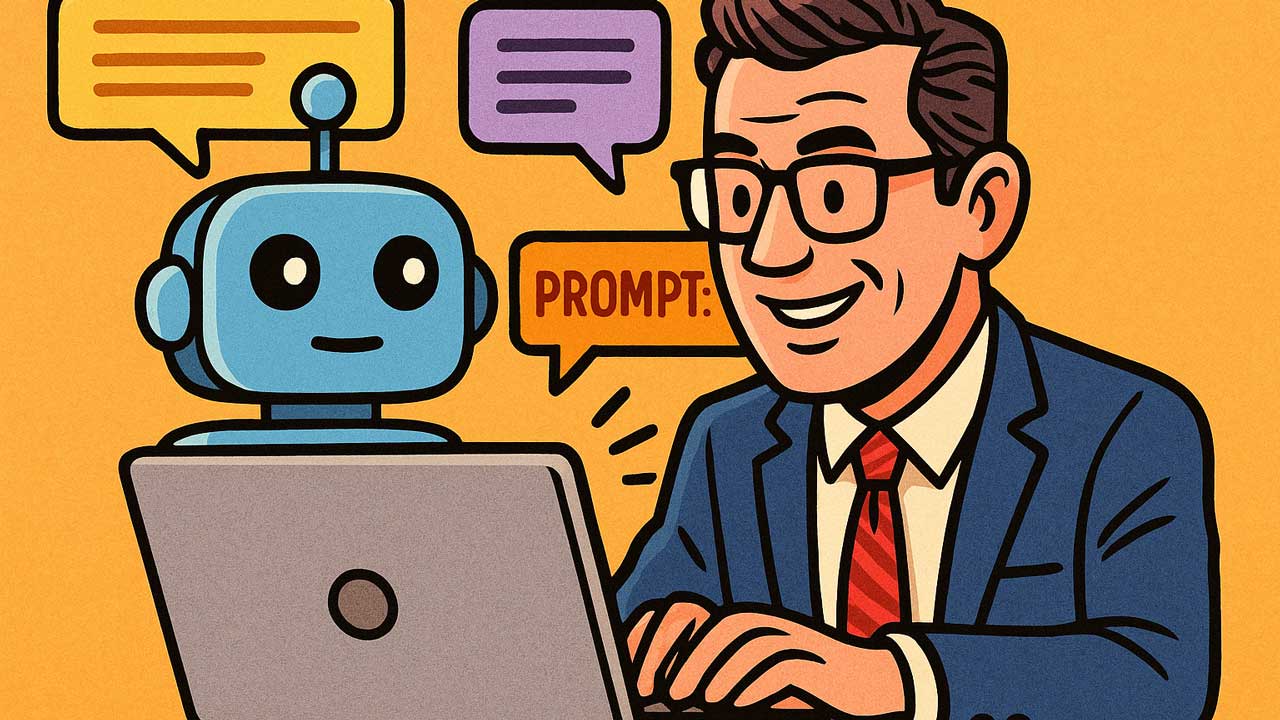

The words we use to control generative AI have become competitive assets. In a recent cookbook entry, OpenAI detailed “meta‑prompting,” a method that uses a more powerful model to write or refine a prompt for another model. Equally important are “pre‑prompts,” system-level instructions that set the model’s identity, tone, and behavior before any user input is received. These may sound like technical nuances, but together they will shape how enterprises deploy, govern, and scale AI across the organization. Let's explore.