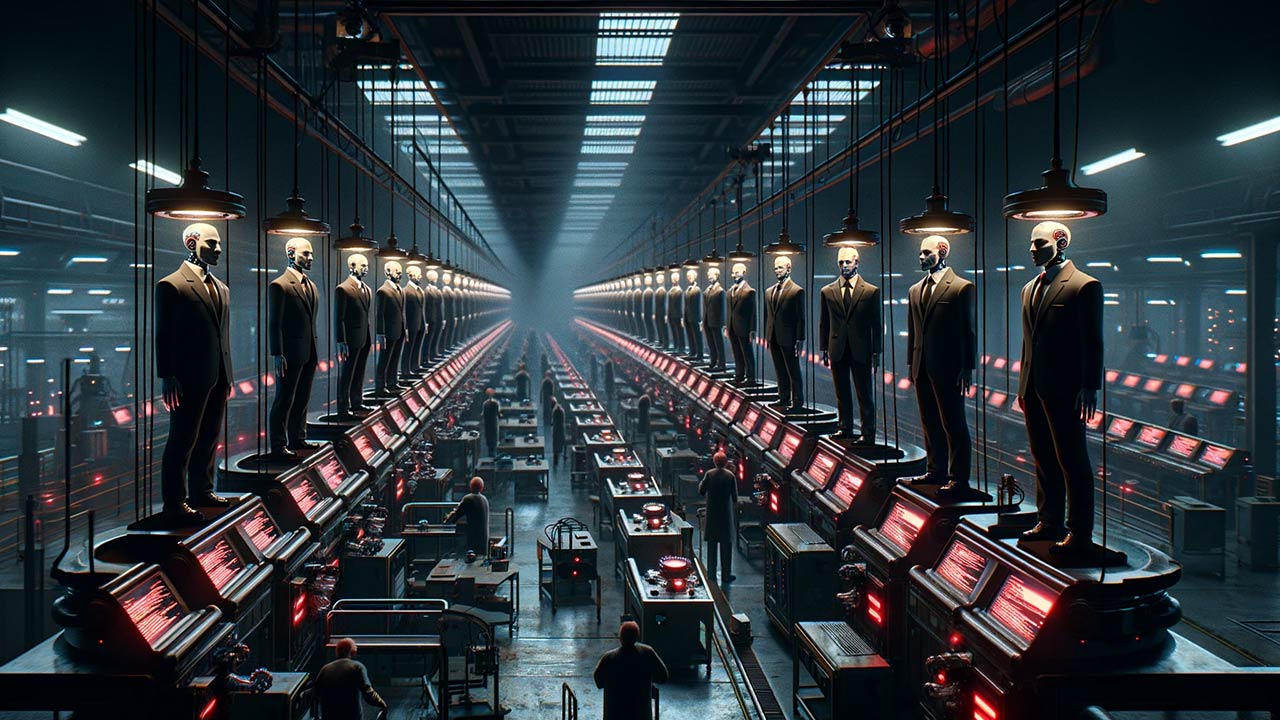

Image created using DALL·E 3 with this prompt: Create an image of a factory churning out AI replicas of political candidates. There is a long assembly line of identical politicians, each representing an AI version meant to do evil. Aspect ratio: 16×9.

As generative AI is woven ever more tightly into the fabric of our daily lives, its misuse in the political arena has raised significant concerns, particularly as the November elections approach. To some, recent AI-powered incidents signal an urgent need for legislative action.

AI’s potential to influence elections is undeniable, from generating persuasive campaign materials to orchestrating sophisticated phishing attacks that defraud donors. In one recent case, a robocall in New Hampshire used a deepfake of President Biden’s voice to urge voters to stay home during the presidential primary, a deceptive tactic traced back to entities operating with alarming impunity.

Politicians are taking note. It’s not yet clear whether an AI-powered campaign can spread enough good news to get you elected, though common sense says it can and will. What is clear is that an AI-powered campaign can super-automate the intentions of extremely bad actors and do enough damage to get you un-elected.

Recognizing the gravity of the situation, organizations like Public Citizen and the Brennan Center for Justice are advocating for stringent regulations. Their efforts are aimed at prompting Congress and federal agencies to enact measures that would curb the malicious use of AI in elections. Proposed legislation, such as the Deceptive Practices and Voter Intimidation Prevention Act, seeks to criminalize the dissemination of misleading information to voters, which is a step in the right direction.

However, the challenge extends beyond domestic boundaries. Adversaries can exploit AI to interfere in elections from afar, rendering national regulations only partially effective. This global dimension likely necessitates a coordinated international response to deter AI-facilitated election interference.

What should this look like? Domestic legislation will do nothing to stop a hostile nation-state from conducting AI-based psyops. Worse, our federal lawmakers and regulators do not seem to have even a cursory understanding of the size and scope of the technological issue. You don’t need to use any big tech platform to do immense damage; an open source model will do nicely. Congress can’t stop robocallers, so how does it plan to stop deepfakes and industrialized, weaponized misinformation?

Author’s note: This is not a sponsored post. I am the author of this article and it expresses my own opinions. Neither I nor my company is receiving compensation for it. This work was created with the assistance of various generative AI models.