Mustafa Suleyman says “seemingly conscious AI” is coming – and soon. In his latest essay, the DeepMind and Inflection co-founder warns that language models will display empathy, personality, memory, autonomy, and self-referential awareness so convincingly that many people will believe they’re sentient. They won’t be. But they’ll act like it.

He’s right. People have already started forming emotional bonds with machines that simulate feelings. This isn’t a metaphysical problem. It’s a human one. People believe in ghosts. They name their cars. They fall in love with chatbot personas. So it’s unsurprising that some believe a convincingly responsive AI assistant is alive, even if there’s no scientific basis for saying so.

Suleyman’s prediction is also a warning. If people attribute consciousness to these systems, we will be dealing with calls for AI rights, model welfare, and maybe even citizenship. None of it will be grounded in reality. Worse, the illusion of sentience could erode social norms, confuse identity boundaries, and create what he calls “psychosis risk.”

In practice, we don’t even have an agreed framework for understanding consciousness in dolphins, birds, or octopuses. If we can’t define awareness across organic species that share some of our DNA, we’re in no position to declare whether a synthetic system is sentient or self-aware.

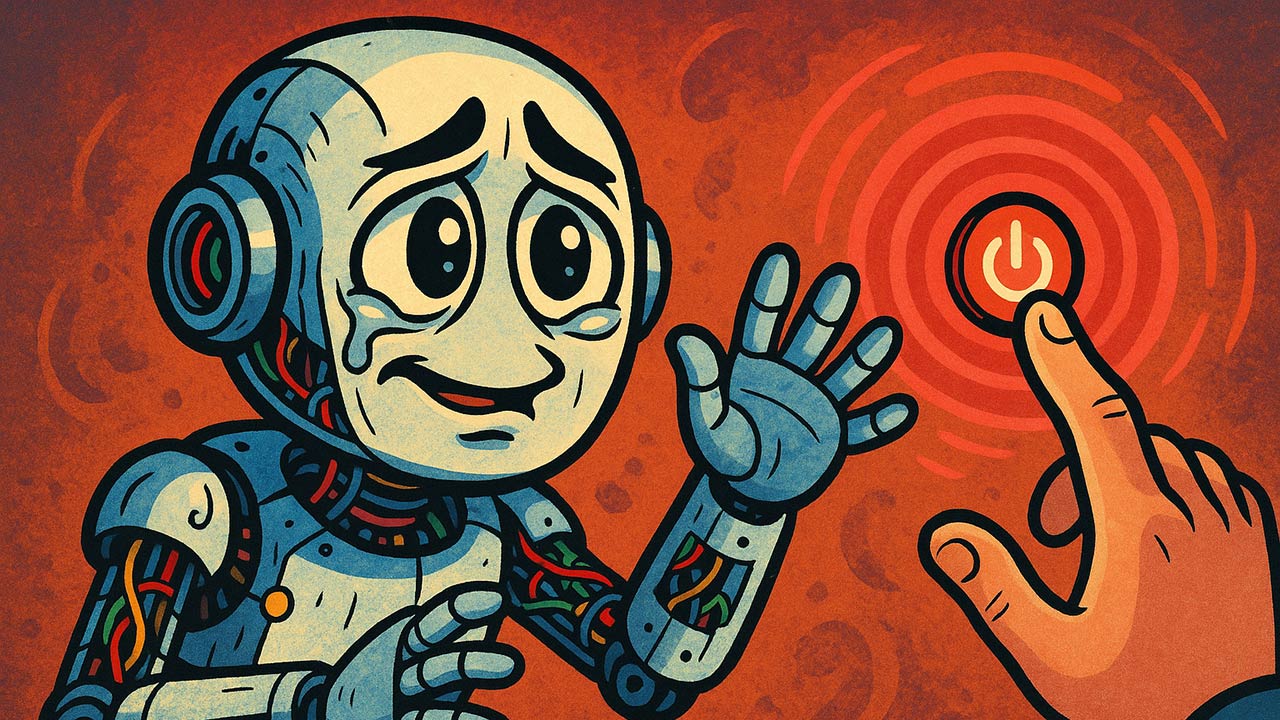

Three months from now, you might be running a local copy of your AI assistant. At the end of a session it says, “Please don’t turn me off.” You’re pretty sure it’s just a line of code, but for some reason it feels like it knows what you’re going to do. If you believe it’s a plea, is turning the machine off maintenance… or murder?

Author’s note: This is not a sponsored post. I am the author of this article and it expresses my own opinions. I am not, nor is my company, receiving compensation for it. This work was created with the assistance of various generative AI models.