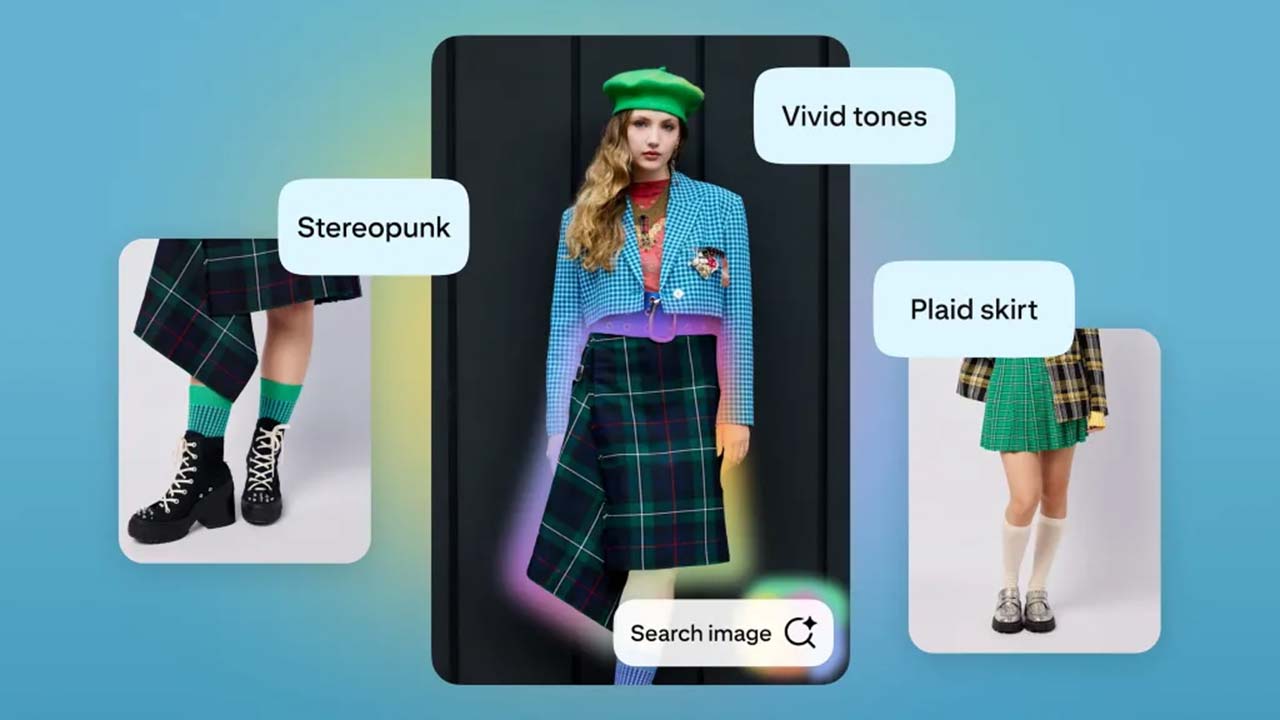

Pinterest has added a new AI-powered feature to its visual search engine. Now, when a user taps on an image, Pinterest suggests words to describe what they’re seeing. Tap a chair and get “mid-century,” “woven,” “Japandi.” The AI guides discovery, teaches vocabulary, and sharpens consumer intent, all of which sounds very useful.

Pinterest’s new “Shop with Camera” feature lets users snap a photo of anything and instantly find similar items from Pinterest’s retail partners. Collages (once static mood boards) are now interactive storefronts, with each image linking to a shoppable product. Pinterest’s scene-based visual search can even identify and categorize multiple objects in a single photo, offering personalized matches for each.

I like Pinterest’s domain-specific approach. The company says more than 1.5 billion images were shopped last year and that product saves are up 70%. Enhanced image search is an obvious strategy, but it raises two questions: Do you need your products optimized for visual-first, AI-powered discovery? If so, how (exactly) do you do that?

Author’s note: This is not a sponsored post. I am the author of this article and it expresses my own opinions. I am not, nor is my company, receiving compensation for it. This work was created with the assistance of various generative AI models.