Anthropic’s Barry Zhang gave a short talk at the AI Engineer Summit called “How We Build Effective Agents.” The talk, which builds on an earlier Anthropic blog post, offers the clearest taxonomy I have seen of what an agent actually is, what a workflow actually is, and when each one belongs in production. If you are wondering what your team should be building this quarter, this framing will be extremely helpful.

What is the difference between a task, a workflow, and an agent?

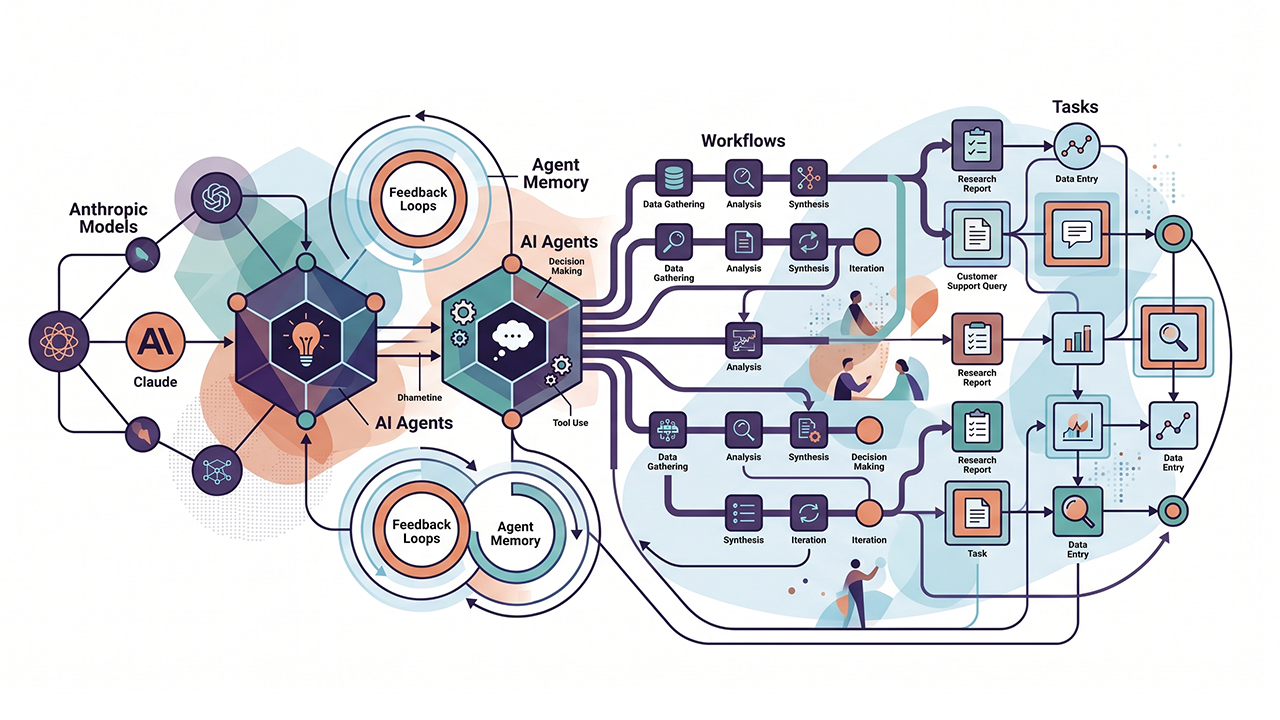

Task – A single model call: Summarize this. Classify that. Extract these fields. Two years ago this felt like magic. Today it is table stakes. The cost is predictable and the failure modes are bounded.

Workflow – Multiple model calls in a predefined control flow. You decide the steps; the model fills them in. Route the customer email to the right queue, draft a response, check the response against policy, then send. The control flow is yours. The intelligence happens at each node.

Agent – A model using tools in a loop, deciding its own trajectory. You give it a goal, a set of tools, and a system prompt. It decides what to do next based on what just happened. The control flow belongs to the model.

With a workflow, you own the plumbing. With an agent, the model owns the plumbing. Every other tradeoff is based on that single structural choice.
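The structural difference can be sketched in a few lines of Python. This is a minimal illustration, not Anthropic's implementation: `fake_model`, its canned replies, the `route:`/`draft:`/`check:`/`tool:`/`finish:` prefixes, and the `lookup_order` tool are all hypothetical stand-ins for a real model call.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for a real model call. It returns canned text so the
# sketch runs without any API access.
def fake_model(prompt: str) -> str:
    if prompt.startswith("route:"):
        return "billing"
    if prompt.startswith("draft:"):
        return "Sorry about the double charge. A refund is on its way."
    if prompt.startswith("check:"):
        return "ok"
    # Agent prompts: ask for a lookup first, finish once a result is visible.
    if "lookup_order ->" in prompt:
        return "finish:Refund issued for order 42"
    return "tool:lookup_order"

def run_task(text: str) -> str:
    """Task: a single model call."""
    return fake_model("route:" + text)

def run_workflow(email: str) -> Dict[str, object]:
    """Workflow: the code fixes the order of steps; the model fills each one in."""
    queue = fake_model("route:" + email)
    draft = fake_model("draft:" + email)
    verdict = fake_model("check:" + draft)
    return {"queue": queue, "draft": draft, "sent": verdict == "ok"}

def run_agent(goal: str, tools: Dict[str, Callable[[], str]], max_steps: int = 5) -> str:
    """Agent: the model's reply picks the next step on each pass through the loop."""
    history: List[str] = [goal]
    for _ in range(max_steps):
        reply = fake_model("\n".join(history))
        if reply.startswith("finish:"):
            return reply[len("finish:"):]
        tool_name = reply[len("tool:"):]
        history.append(f"{tool_name} -> {tools[tool_name]()}")
    return "stopped: step budget exhausted"

if __name__ == "__main__":
    print(run_task("I was charged twice"))
    print(run_workflow("I was charged twice"))
    print(run_agent("Resolve the refund request",
                    {"lookup_order": lambda: "order 42 was charged twice"}))
```

In `run_workflow` the sequence of calls is written by you and never changes. In `run_agent` the only fixed structure is the loop and the step budget; which tool runs, and when to stop, comes from the model's reply.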

When should you build an agent instead of a workflow?

Zhang’s first rule is, “Don’t build agents for everything.” The hype cycle wants you to. Vendors want you to. Resist.

Here’s how to think about it:

Is the task ambiguous enough that you cannot pre-map the decision tree? If you can map it, build it as a workflow. Optimize each node. You will get more accuracy, more control, and lower cost than any agent will give you.

Is the task valuable enough to justify the token spend? Agents explore, and exploration costs money. Zhang’s rule of thumb: a 10-cent-per-task budget buys you roughly 30,000 to 50,000 tokens. That is workflow territory. A customer support operation processing one million tickets a month at 5x the necessary token spend burns roughly $1.5M in unnecessary cost per year. Choose wisely.
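The arithmetic behind those numbers can be checked in a few lines. Note the assumption: the article does not state a baseline cost per ticket, so the `ASSUMED_BASELINE_USD` value below (about 3 cents) is backed out from the $1.5M figure, treating "unnecessary" spend as the four-fifths of a 5x bill that sits above the baseline. The function names are illustrative only.

```python
def implied_price_per_million(budget_usd: float, tokens: int) -> float:
    """Blended price per million tokens implied by a budget and a token count."""
    return budget_usd / tokens * 1_000_000

def annual_overspend(tickets_per_month: int, baseline_usd: float, multiplier: float) -> float:
    """Yearly cost above baseline when each ticket costs `multiplier` x the baseline."""
    return tickets_per_month * 12 * baseline_usd * (multiplier - 1)

# Rule of thumb from the talk: 10 cents buys roughly 30,000 to 50,000 tokens.
low_price = implied_price_per_million(0.10, 50_000)
high_price = implied_price_per_million(0.10, 30_000)

# ASSUMPTION: not given in the article; chosen so the result matches its $1.5M figure.
ASSUMED_BASELINE_USD = 0.03125
overspend = annual_overspend(1_000_000, ASSUMED_BASELINE_USD, 5)

if __name__ == "__main__":
    print(f"Implied token price: ${low_price:.2f} to ${high_price:.2f} per million")
    print(f"Annual overspend at 5x: ${overspend:,.0f}")
```

Change the baseline or the multiplier to match your own workload; the point is that the overspend scales linearly with both volume and the exploration multiplier.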

Are the bottleneck capabilities solid? If the model cannot reliably write code, debug code, and recover from its own errors, do not build a coding agent. Errors compound. Each loop iteration is another chance for the weakest link to fail, so the odds of a clean run shrink as the trajectory gets longer.
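A rough way to see the compounding, assuming each step fails independently with the same probability (a simplification; real agents can sometimes recover from a bad step, and the 95% figure is purely illustrative):

```python
def clean_run_probability(step_success: float, steps: int) -> float:
    """Chance that every step succeeds, assuming steps fail independently."""
    return step_success ** steps

if __name__ == "__main__":
    # Illustrative per-step success rate of 95%.
    for steps in (1, 5, 10, 20):
        print(steps, round(clean_run_probability(0.95, steps), 3))
```

Under these assumptions, a step that works 95% of the time gives roughly a 60% chance of a clean ten-step run and roughly 36% over twenty steps.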

What is the cost of error? If a mistake is high-stakes and hard to detect, autonomy becomes a liability. Read-only access and human-in-the-loop are real mitigations. But they also cap how far you can scale.

Coding is a great agent use case because it satisfies these conditions. The task is ambiguous. The output has obvious value. Frontier models are good at it. Unit tests verify the work. That is why agentic coding tools work today and why most other categories are still figuring it out.
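The four questions can be written down as a simple screening function. This is one possible encoding, not something from the talk: the `Candidate` fields, the blocker messages, and the two example projects are hypothetical, and real decisions will rarely reduce to four booleans.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Candidate:
    name: str
    can_premap_decision_tree: bool        # question 1: is the task NOT ambiguous?
    value_justifies_token_spend: bool     # question 2
    bottleneck_capabilities_solid: bool   # question 3
    errors_cheap_or_easy_to_detect: bool  # question 4

def agent_blockers(c: Candidate) -> List[str]:
    """Return the reasons not to build an agent; an empty list means none were found."""
    blockers: List[str] = []
    if c.can_premap_decision_tree:
        blockers.append("decision tree can be pre-mapped: build a workflow")
    if not c.value_justifies_token_spend:
        blockers.append("task value does not justify exploratory token spend")
    if not c.bottleneck_capabilities_solid:
        blockers.append("model is unreliable at a bottleneck capability")
    if not c.errors_cheap_or_easy_to_detect:
        blockers.append("errors are high-stakes or hard to detect")
    return blockers

# Hypothetical examples.
coding = Candidate("coding assistant", False, True, True, True)
routing = Candidate("ticket routing", True, False, True, True)

if __name__ == "__main__":
    for candidate in (coding, routing):
        print(candidate.name, agent_blockers(candidate))
```

Returning the list of blockers rather than a single yes or no keeps the reasoning visible, which is useful when the answer is "agent, but only with read-only access or a human in the loop."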

Core Components

To simplify this even further, Anthropic thinks of an agent as three components: the environment it operates in, the tools it can call, and the system prompt that defines its goals and constraints. Everything else (caching, parallelization, trajectory inspection) is downstream optimization. Nail those three first.

At Your Next Leadership Team Meeting

- Assign someone to audit every internal project labeled “agent” against the four questions above to see how many are actually agents and how many are workflows wearing a costume.

- Instrument token economics at p50 and p95 for every production AI workload to see what your agents actually cost to run.

- Stand up trajectory replay and logging before authorizing any new agentic deployment, so you have visibility into what the AI was looking at when something goes wrong.
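For the token-economics item, a first pass needs nothing more than per-task token counts and a percentile function. The sketch below uses the nearest-rank method; the sample counts and the `ASSUMED_PRICE_PER_MILLION_USD` value are made up for illustration and should be replaced with your own logs and pricing.

```python
import math
from typing import Sequence

def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def cost_usd(tokens: float, price_per_million_usd: float) -> float:
    """Convert a token count to dollars at a blended per-million price."""
    return tokens * price_per_million_usd / 1_000_000

# Hypothetical tokens-per-task samples; one runaway trajectory dominates the tail.
tokens_per_task = [1200, 1500, 1400, 1300, 1600, 1250, 1450, 1350, 1550, 48000]
ASSUMED_PRICE_PER_MILLION_USD = 3.00  # assumption, not a quoted price

p50 = percentile(tokens_per_task, 50)
p95 = percentile(tokens_per_task, 95)

if __name__ == "__main__":
    print(f"p50: {p50} tokens, ${cost_usd(p50, ASSUMED_PRICE_PER_MILLION_USD):.4f}")
    print(f"p95: {p95} tokens, ${cost_usd(p95, ASSUMED_PRICE_PER_MILLION_USD):.4f}")
```

The gap between p50 and p95 is the interesting number: a workflow's two values tend to sit close together, while an agent that occasionally wanders shows up as a long tail.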

With these three pieces in place, agentic AI becomes a forcing function for organizational maturity.

Labels Matter

The vocabulary of AI is a mess. Prompt, agent, skill, agentic workflow, AI assistant, and claw all mean different things to different people, and most leadership teams use them interchangeably. George Bernard Shaw wrote that “all professions are conspiracies against the laity.” The AI industry’s loose vocabulary is a conspiracy against itself.

Labels matter because architecture follows them. Force every AI initiative to declare itself as a task, a workflow, or an agent. Get the label wrong and you are paying for an agent when a workflow would have done the job. Get the label right and the cost, governance, and accountability questions answer themselves.

Every company needs a Claw strategy. Do you have one?

Author’s note: This is not a sponsored post. I am the author of this article and it expresses my own opinions. I am not, nor is my company, receiving compensation for it. This work was created with the assistance of various generative AI models.