Last Sunday I published an article about getting AI to write in my voice. Our workflow included voice profiles, editorial validators, style enforcement, the works. This week the system broke. Same code, same prompts, different model version. The workflow that produced polished drafts on Monday produced noticeably worse ones by Thursday. Nothing in our codebase changed. The model underneath it did.

If you are beginning to deploy agentic workflows at scale, this is a cautionary tale with a technical explanation and a practical fix.

A Purpose-Built Benchmark

AI model builders benchmark each other using standardized tests: MMLU for reasoning and knowledge, GPQA for graduate-level science, ARC-AGI for abstract problem-solving. These scores are great for bragging rights, but they do not help me understand whether a given model can be taught to write a coherent paragraph in my voice (or your brand voice). So two years ago, I built my own benchmark. It’s a purpose-built agentic workflow designed to produce prose indistinguishable from my published writing. Scoring AI-generated prose is subjective. That’s the point. I wanted to test the workflows against my judgement.

What Happened

When Anthropic’s Claude Opus 4.5 debuted, it was the strongest prose writer available. We added the model to our blog-writing test workflow. Three thousand lines of code. Voice profiles extracted from hundreds of published posts. Forty-seven prohibition rules (“never use em dashes,” “never use contrastive constructions,” “never use ‘delve’”). Three editorial validators. Fifty-two unit tests. Every test passed.

When Opus 4.6 shipped on February 5, the system that reliably produced publishable drafts on 4.5 started producing drafts that read like they were written by a different author. Which, in a sense, they were.

Why the “.1” Is Misleading

The version number suggests a point release. The behavioral changes are generational. Opus 4.6’s ARC-AGI-2 benchmark score nearly doubled, from 37.6% to 68.8%, the largest single-generation leap in abstract reasoning any lab has reported. The context window jumped from 200,000 tokens to a million. The model makes stronger autonomous creative decisions and adapts its reasoning depth dynamically based on task complexity.

A “.1” increment hides a massive leap in capability. And capability changes how a model responds to every instruction you have ever written for it.

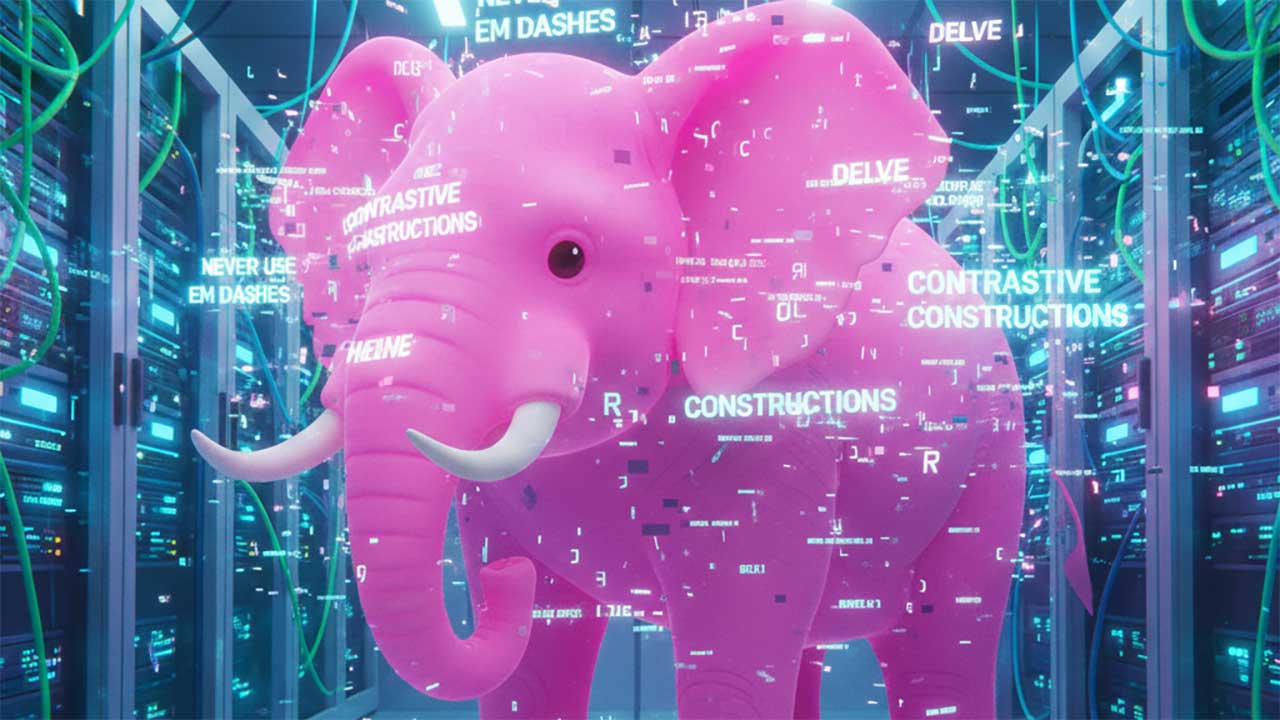

The Pink Elephant Problem

Our 47 prohibition rules (“never write X”) worked on 4.5. The model followed orders. On 4.6, the same prohibitions activated the very patterns they were designed to suppress.

Researchers call this the Pink Elephant Problem. A 2024 paper by Castricato et al. (arXiv:2402.07896) demonstrated that instructing an LLM to avoid a specific concept (a “Pink Elephant”) often produces the opposite result, because attention-based architectures process the forbidden concept in order to suppress it. Related work by Hwang et al. (arXiv:2404.15154) showed that image generation models exhibit the same vulnerability: telling DALL-E 3 to exclude pink elephants produces more of them.

The more capable the model, the more deeply it processes the constraint, and the more likely it is to produce exactly what you told it to avoid. Anthropic’s own 4.6 prompt guide makes the point directly: tell the model what to do, not what to avoid. Our 47 prohibition rules were a 47-item menu of mistakes the model was primed to make.

The Fix

I stripped out the rules. Replaced prohibition lists with published posts as voice exemplars, showing the model what good looks like through few-shot examples. Then I moved the writing step into a clean sub-agent context (roughly 7,000 tokens of writing-relevant content instead of 28,000 tokens of software engineering overhead competing for the model’s attention). Then I went old school: post-generation mechanical enforcement using simple regex, applied after the model writes freely. The system generates, then enforcement happens afterwards. This is more or less the same way human copywriters work with human editors and human proofreaders.
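
For readers who want to picture that last step, here is a minimal sketch of a post-generation enforcement pass in Python. The two rules are illustrative assumptions based on the prohibitions quoted earlier, not the actual rule set, and the names `RULES` and `enforce` are hypothetical. Some patterns can be repaired mechanically; others are only flagged for a human editor.

```python
import re
from typing import List, Tuple

# Hypothetical rules for illustration only.
# Each rule is (name, compiled pattern, replacement); a replacement of None
# means "flag for human review" instead of rewriting automatically.
RULES = [
    ("em_dash", re.compile(r"\s*\u2014\s*"), ", "),
    ("delve", re.compile(r"\bdelv(?:e|es|ed|ing)\b", re.IGNORECASE), None),
]


def enforce(draft: str) -> Tuple[str, List[str]]:
    """Apply mechanical fixes after generation.

    Returns the cleaned text and the names of rules that matched
    but have no safe automatic replacement.
    """
    flagged = []
    for name, pattern, replacement in RULES:
        if replacement is None:
            if pattern.search(draft):
                flagged.append(name)
        else:
            draft = pattern.sub(replacement, draft)
    return draft, flagged
```

The point of the design is that the model never sees these patterns. The rules live entirely outside the prompt, so they cannot prime the behavior they are meant to remove.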

Our solution required three things. First, behavioral regression test suites to evaluate whether the output still meets our quality standards. Going forward we will run model fitness evaluations on a weekly cadence (mission-critical workflows would require continuous monitoring). Second, human accountability: each agentic workflow now has a named owner responsible for monitoring drift. Third, modular architecture with clean separation between business logic and model-specific tuning, so that every critical workflow can be refactored without starting from scratch. Doing all of this took less time than I thought it would. (Vibe-coding is life-changing.)
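
A behavioral regression check can start very small. The sketch below is an assumption about one possible shape, not the suite described above: it computes two crude style metrics from published posts, then reports any metric on a new draft that deviates from the baseline by more than a relative tolerance. The metric names, the 25% tolerance, and the functions `style_metrics` and `drift_report` are all hypothetical, and metrics like these are only a proxy for editorial judgment.

```python
import re
from statistics import mean


def style_metrics(text: str) -> dict:
    """Compute a few crude, model-independent style measurements."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = re.findall(r"[A-Za-z']+", text)
    return {
        "avg_sentence_words": len(words) / len(sentences) if sentences else 0.0,
        "avg_word_chars": mean(len(w) for w in words) if words else 0.0,
    }


def drift_report(baseline_texts, candidate, tolerance=0.25):
    """Return {metric: (expected, observed)} for metrics outside tolerance.

    `tolerance` is relative: 0.25 means a 25% deviation from the baseline mean.
    An empty dict means no drift was detected by these metrics.
    """
    baseline = [style_metrics(t) for t in baseline_texts]
    observed = style_metrics(candidate)
    report = {}
    for key, value in observed.items():
        expected = mean(m[key] for m in baseline)
        if expected and abs(value - expected) / expected > tolerance:
            report[key] = (expected, value)
    return report
```

Run on a schedule against a fixed set of prompts, a non-empty report is a signal for the workflow's named owner to read the drafts, not a verdict on its own.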

The Practical Lesson

If you are deploying agentic workflows, plan for model drift. Changes compound. A subtle change in how the model interprets a system prompt will cascade through the entire chain. If that chain touches customer communications, compliance language, pricing logic, or investor messaging, drift can become a serious business risk. Audit your agentic workflows to ensure that when models drift or improve, you can easily adapt.

Model Garden Madness

There’s a bit more to the story. While the Claude Opus-focused agentic workflow in our blog-writing benchmark system ceased to function as designed, the exact same workflow continued to function perfectly with OpenAI’s GPT-5 as well as Google’s Gemini. Recent changes to those models had no demonstrable impact on the outputs of our blog-writing workflows. Model monoculture concentrates risk; multi-model architectures distribute it.
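
One way to make a workflow multi-model is to keep everything model-specific in a small profile object and let the business logic iterate over profiles. The sketch below is a hypothetical illustration, not the system described in this article: `ModelProfile`, `write_draft`, and the `accept` callback are invented names, and `generate` stands in for whatever vendor client a given profile would wrap.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ModelProfile:
    """Model-specific tuning, kept apart from business logic (hypothetical)."""
    name: str
    system_prompt: str
    generate: Callable[[str, str], str]  # (system_prompt, task) -> draft


def write_draft(
    task: str,
    profiles: List[ModelProfile],
    accept: Callable[[str], bool],
) -> Tuple[str, str]:
    """Try each profile in order; return (model_name, draft) for the first
    draft that passes the acceptance check."""
    for profile in profiles:
        draft = profile.generate(profile.system_prompt, task)
        if accept(draft):
            return profile.name, draft
    raise RuntimeError("no configured model produced an acceptable draft")
```

When one vendor's update changes behavior, the repair is confined to that profile's prompt and tuning, while the acceptance check and the rest of the workflow stay untouched.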

The Half-Life of Optimization

It is embarrassing to publish a workflow tutorial that becomes obsolete in seven days. But it is also reassuring. Our internal controls surfaced the drift, and our culture of continuous adaptation allowed us to quickly refactor the code. This week served as a reminder that the goal isn’t to build systems that last forever; it’s to build systems that can be rebuilt in an afternoon.

Author’s note: This is not a sponsored post. I am the author of this article and it expresses my own opinions. I am not, nor is my company, receiving compensation for it. This work was created with the assistance of various generative AI models.